NVIDIA CUDA Toolkit libraries

11/8/2023

Run:AI automates resource management and orchestration for machine learning infrastructure. With Run:AI, you can automatically run as many compute-intensive experiments as needed, incorporating CUDA.

Here are some of the capabilities you gain when using Run:AI:

- Advanced visibility: create an efficient pipeline of resource sharing by pooling GPU compute resources.
- No more bottlenecks: you can set up guaranteed quotas of GPU resources to avoid bottlenecks and optimize billing.
- A higher level of control: Run:AI enables you to dynamically change resource allocation, ensuring each job gets the resources it needs at any given time.

Run:AI simplifies machine learning infrastructure pipelines, helping data scientists accelerate their productivity and the quality of their models. Learn more about the Run:AI GPU virtualization platform.

You can separate applications and computations into independent functions or problems and perform them with CUDA blocks. Each block is assigned to a sub-problem or function and further breaks down the tasks to fit the available threads. Blocks are automatically scheduled on your GPU multiprocessors by the CUDA runtime.

The diagram below shows how this can work with a CUDA program defined in eight blocks. Through the runtime, the blocks are allocated to the streaming multiprocessors (SMs) available on the GPU. Note that the diagram shows two separate GPU situations, one with four multiprocessors and one with eight. This highlights how blocks can be distributed in multiple situations without code changes.

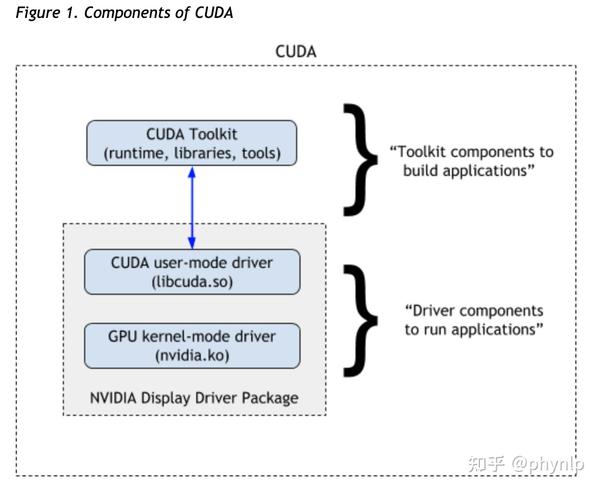
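As a rough illustration of blocks working on independent sub-problems, the sketch below has each block sum its own slice of an array, using shared memory and a synchronization barrier inside the block. The kernel name `blockSum`, the host helper `sumOnes`, and the block size of 256 are assumptions made for this example and do not come from the original article. The code assumes `n > 0`.

```cuda
#include <cuda_runtime.h>
#include <vector>

constexpr int kThreadsPerBlock = 256;  // assumed block size (a power of two)

// Each block reduces its own slice of `in` to a single partial sum.
// Must be launched with blockDim.x == kThreadsPerBlock.
__global__ void blockSum(const float *in, float *partial, int n) {
    __shared__ float cache[kThreadsPerBlock];       // shared by the threads of one block
    int i = blockIdx.x * blockDim.x + threadIdx.x;  // global element index
    cache[threadIdx.x] = (i < n) ? in[i] : 0.0f;
    __syncthreads();  // barrier: every thread has stored its value

    // Tree reduction within the block.
    for (int stride = blockDim.x / 2; stride > 0; stride /= 2) {
        if (threadIdx.x < stride) {
            cache[threadIdx.x] += cache[threadIdx.x + stride];
        }
        __syncthreads();  // barrier: this reduction step is complete
    }
    if (threadIdx.x == 0) partial[blockIdx.x] = cache[0];
}

// Hypothetical host helper: sums n ones on the GPU.
// Returns the total, or -1.0f if a CUDA call fails.
float sumOnes(int n) {
    int blocks = (n + kThreadsPerBlock - 1) / kThreadsPerBlock;
    std::vector<float> hIn(n, 1.0f), hPartial(blocks, 0.0f);

    float *dIn = nullptr, *dPartial = nullptr;
    if (cudaMalloc(&dIn, n * sizeof(float)) != cudaSuccess) return -1.0f;
    if (cudaMalloc(&dPartial, blocks * sizeof(float)) != cudaSuccess) {
        cudaFree(dIn);
        return -1.0f;
    }
    cudaMemcpy(dIn, hIn.data(), n * sizeof(float), cudaMemcpyHostToDevice);

    // The runtime decides which SM runs each block; nothing here names an SM.
    blockSum<<<blocks, kThreadsPerBlock>>>(dIn, dPartial, n);

    // This blocking copy also surfaces errors from the kernel launch.
    cudaError_t err = cudaMemcpy(hPartial.data(), dPartial,
                                 blocks * sizeof(float), cudaMemcpyDeviceToHost);
    cudaFree(dIn);
    cudaFree(dPartial);
    if (err != cudaSuccess) return -1.0f;

    float total = 0.0f;
    for (float p : hPartial) total += p;  // combine the per-block results on the host
    return total;
}
```

Calling `sumOnes(2048)` launches eight blocks of 256 threads. The same code runs unchanged whether the GPU has four SMs or eight, because the launch specifies only the number of blocks and leaves placement to the runtime.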

Because most deep learning frameworks rely on the same CUDA and cuDNN libraries, they deliver roughly the same performance for equivalent uses, and updates to CUDA or cuDNN affect all frameworks equally.

Outside of cuDNN, there are three other main GPU-accelerated libraries for deep learning: TensorRT, NCCL, and DeepStream. TensorRT is a library created by NVIDIA for high-performance deep learning inference optimization and runtime. NCCL is a library of multi-node and multi-GPU communication primitives. DeepStream is a library for video inference.

In addition to its components for deep learning, the CUDA Toolkit includes various libraries and components. These provide support for debugging and optimization, compiling, documentation, runtimes, signal processing, and parallel algorithms. CUDA Toolkit libraries support all NVIDIA GPUs.

CUDA provides support for several popular languages, including C, C++, Fortran, Python, and MATLAB. The interface is based on C/C++, and the compiler applies abstractions to incorporate parallelism and simplify programming.

Using the CUDA programming model, you can access three main language extensions:

- CUDA blocks: a group or collection of threads.
- Shared memory: a region of memory shared among the threads of a block.
- Synchronization barriers: enable multiple threads to wait until all of them have reached a specific point.

One of the main benefits of the CUDA model is that it enables you to write scalar programs: you write the code for a single element, and the hardware runs it across many threads. The example kernel, declared as `__global__ void vectorAdd(float *A, float *B, float *C, int numElements)`, adds two vectors (A and B) and writes the result to a third vector (C). It is executed on the GPU and adds the vectors as though they were scalar numbers. When run, the addition is performed in parallel, with each vector element processed by a different thread in the CUDA block.

The CUDA model enables you to scale your programs transparently.
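The article gives only the signature of the `vectorAdd` kernel. A minimal sketch of how such a kernel could be completed and launched is shown here. The kernel body, the host helper `runVectorAdd`, the test data, and the block size of 256 are assumptions made for illustration and are not the article's own code. The helper assumes `numElements > 0`.

```cuda
#include <cstddef>
#include <cuda_runtime.h>
#include <vector>

// Scalar-style kernel: each thread adds exactly one pair of elements.
__global__ void vectorAdd(float *A, float *B, float *C, int numElements) {
    int i = blockDim.x * blockIdx.x + threadIdx.x;  // this thread's element index
    if (i < numElements) {                          // the last block may be partly idle
        C[i] = A[i] + B[i];
    }
}

// Hypothetical host helper: runs vectorAdd and checks the result on the CPU.
// Returns true only if every CUDA call succeeds and every element matches.
bool runVectorAdd(int numElements) {
    size_t bytes = static_cast<size_t>(numElements) * sizeof(float);
    std::vector<float> hA(numElements), hB(numElements), hC(numElements);
    for (int i = 0; i < numElements; ++i) {
        hA[i] = static_cast<float>(i);
        hB[i] = 2.0f * static_cast<float>(i);
    }

    float *dA = nullptr, *dB = nullptr, *dC = nullptr;
    bool ok = cudaMalloc(&dA, bytes) == cudaSuccess &&
              cudaMalloc(&dB, bytes) == cudaSuccess &&
              cudaMalloc(&dC, bytes) == cudaSuccess;
    if (ok) {
        cudaMemcpy(dA, hA.data(), bytes, cudaMemcpyHostToDevice);
        cudaMemcpy(dB, hB.data(), bytes, cudaMemcpyHostToDevice);

        int threadsPerBlock = 256;  // assumed block size
        int blocksPerGrid = (numElements + threadsPerBlock - 1) / threadsPerBlock;
        vectorAdd<<<blocksPerGrid, threadsPerBlock>>>(dA, dB, dC, numElements);

        // This blocking copy also reports errors from the kernel launch.
        ok = cudaMemcpy(hC.data(), dC, bytes, cudaMemcpyDeviceToHost) == cudaSuccess;
    }
    cudaFree(dA);  // cudaFree on a null pointer is a no-op
    cudaFree(dB);
    cudaFree(dC);

    for (int i = 0; ok && i < numElements; ++i) {
        ok = (hC[i] == hA[i] + hB[i]);
    }
    return ok;
}
```

Calling `runVectorAdd(50000)` from a `main` function should return `true` on a machine with a working CUDA device. The bounds check in the kernel matters because the grid is rounded up to a whole number of blocks, so the final block usually contains threads with no element to process.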

Other frameworks that rely on CUDA for GPU support include Torch, PyTorch, Keras, MXNet, and Caffe2. Most of these frameworks use the cuDNN library, which supports deep neural networks.

Deep learning implementations require significant computing power, like that offered by GPUs. Without GPU systems, many deep learning models would take significantly longer to train, making them more costly and slowing innovation.

For example, when training the models for Google Translate, Google implemented a system with 2,000 server-grade NVIDIA GPUs. They used this system to run hundreds of week-long TensorFlow operations. With a traditional CPU-based system, these operations would have taken months each.

Although TensorFlow is one of the most popular frameworks for deep learning, many other frameworks also rely on CUDA for GPU support.
